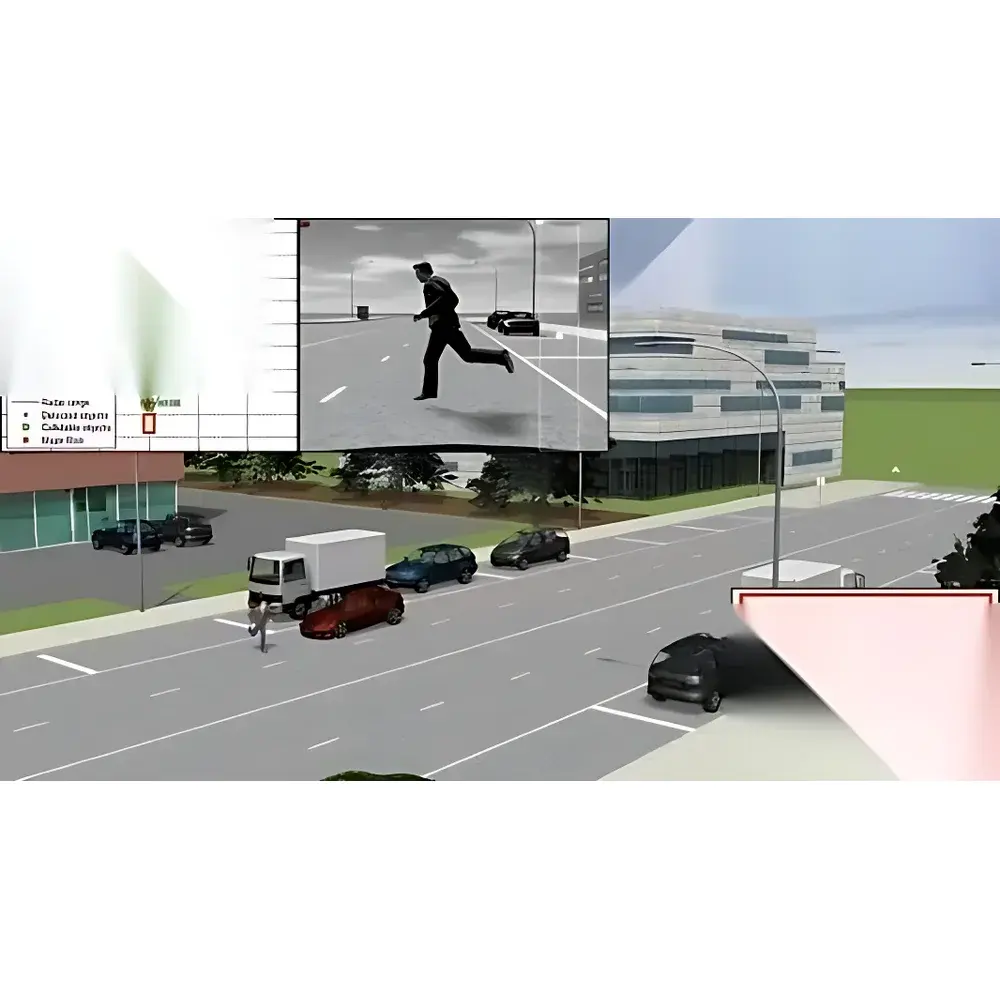

ANSYS VRXPERIENCE Autonomous Driving Simulation Platform

| Brand | ANSYS (USA) |
|---|---|
| Origin | Imported |
| Model | VRXPERIENCE with SCANeR™ Studio Integration |
| Compliance | ISO 26262 ASIL-ready architecture, SAE J3016 Level 3–5 validation support, FDA 21 CFR Part 11–compatible audit trail in data logging modules |
| Software Architecture | FMI 2.0 compliant, C/C++/Python API, Simulink & SCADE/Simulink Real-Time integration, ROS 2 Foxy+ middleware support |
| Rendering Engine | Physically Based Rendering (PBR) with ray-tracing, spectral light modeling (IES/XMP/Spectrum), BRDF/BSDF material database |
| Sensor Simulation | EMVA 1288–compliant camera model, LiDAR point cloud generation (rotating/scanning), HFSS-based mmWave radar ROM co-simulation |

Overview

The ANSYS VRXPERIENCE Autonomous Driving Simulation Platform is a physics-based, full-stack virtual validation environment for the development and certification of ADAS and autonomous driving systems (SAE Levels 3–5). It is built on the SCANeR™ Studio simulation kernel from AVSimulation and tightly integrated with ANSYS multiphysics solvers, including HFSS for electromagnetic radar modeling, optically calibrated OMS material characterization, and high-fidelity vehicle dynamics. The platform delivers deterministic, traceable, and repeatable closed-loop testing across millions of edge-case scenarios.

Unlike empirical or game-engine–based simulators, VRXPERIENCE implements first-principles modeling: photorealistic illumination via spectral ray-tracing, surface optical properties derived from measured BSDF functions, sensor response governed by physical optics and semiconductor physics (per EMVA 1288), and radar echo generation via reduced-order models (ROM) extracted from 3D full-wave electromagnetic simulations. This enables correlation-driven validation, in which simulated sensor outputs (RAW images, point clouds, radar range-Doppler maps) show quantifiable fidelity against real-world ground truth under controlled environmental variables (sun angle, road reflectance, weather attenuation, lens aberration).

The platform supports regulatory-aligned test workflows including ISO/PAS 21448 (SOTIF), UL 4600, and NHTSA AV TEST guidelines, with built-in scenario coverage metrics and traceability matrices for functional safety audits.

Key Features

- Modular, open-architecture simulation framework supporting Model-in-the-Loop (MIL), Software-in-the-Loop (SIL), Hardware-in-the-Loop (HIL), and Driver-in-the-Loop (DIL) configurations

- Physics-accurate 3D scene construction with georeferenced OpenStreetMap import, parametric road network editor (lanes, curvature, elevation, signage), and procedural asset placement (buildings, vegetation, infrastructure)

- Semantic traffic scripting engine enabling behavior-defined agents (vehicles, pedestrians, cyclists) with configurable interaction logic, rule-based decision trees, and Python/C++ extensibility

- Vehicle dynamics integration via FMI 2.0, supporting CarSim, veDYNA, and custom multi-body models; includes pre-validated test datasets for acceleration, braking, cornering, crosswind, and obstacle avoidance

- Multi-sensor co-simulation: EMVA 1288–compliant camera model (lens distortion, quantum efficiency, noise sources, windshield refraction), scanning/rotating LiDAR point cloud generation, and HFSS-derived mmWave radar ROM for realistic RF propagation and clutter modeling

- Headlamp optical simulation module (ANSYS Headlamp) with IES/spectral light source definition, photometric analysis (illuminance maps, isocandela contours), and dynamic ADB/AFS/Matrix LED control validation per ECE R112 and IIHS nighttime test protocols
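The EMVA 1288 camera model mentioned in the feature list is, at its core, a linear model: photons become electrons with quantum efficiency η, a dark signal is added, and the sum is scaled by a system gain K and quantized. The following is a minimal sketch of that published linear model only, not the VRXPERIENCE API. The class name, function names, and parameter values are hypothetical, and lens distortion and windshield refraction are not covered.

```python
from dataclasses import dataclass
from math import sqrt


@dataclass(frozen=True)
class CameraParams:
    """Hypothetical parameter set following EMVA 1288 symbol conventions."""
    quantum_efficiency: float  # eta: electrons generated per incident photon
    system_gain: float         # K: digital numbers (DN) per electron
    dark_signal_e: float       # mu_d: mean dark electrons per exposure
    dark_noise_e: float        # sigma_d: temporal dark noise in electrons (rms)
    bit_depth: int = 12        # ADC resolution used for saturation clipping


def mean_output_dn(p: CameraParams, photons: float) -> float:
    """Mean gray value: mu_y = K * (eta * mu_p + mu_d), clipped at full scale."""
    mu_e = p.quantum_efficiency * photons
    mu_y = p.system_gain * (mu_e + p.dark_signal_e)
    return min(mu_y, float(2 ** p.bit_depth - 1))


def output_variance_dn2(p: CameraParams, photons: float) -> float:
    """Temporal variance: sigma_y^2 = K^2 * (sigma_d^2 + eta * mu_p) + 1/12.

    Shot noise is Poisson, so its variance equals the mean electron count.
    The 1/12 term is the quantization noise of an ideal ADC.
    Only meaningful below saturation.
    """
    mu_e = p.quantum_efficiency * photons
    return p.system_gain ** 2 * (p.dark_noise_e ** 2 + mu_e) + 1.0 / 12.0


def snr(p: CameraParams, photons: float) -> float:
    """Signal-to-noise ratio: (mu_y - mu_y_dark) / sigma_y."""
    signal_dn = p.system_gain * p.quantum_efficiency * photons
    return signal_dn / sqrt(output_variance_dn2(p, photons))
```

Sweeping `photons` over several decades and plotting `snr` against it gives the kind of curve that can be compared with a lab-measured sensor characterization.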

Compatibility & Compliance

VRXPERIENCE is designed for compliance-critical validation environments requiring auditable traceability and reproducibility. It supports ISO 26262-8 tool qualification evidence packages and integrates with requirements management tools (e.g., IBM DOORS, Jama Connect) via standardized APIs. All simulation logs include timestamped, immutable metadata: environmental state vectors (solar zenith, humidity, precipitation rate), sensor configuration parameters (exposure time, gain, FOV), and vehicle kinematic states (yaw rate, lateral acceleration, wheel slip ratio). Data export formats conform to ASAM OSI and ASAM OpenSCENARIO 1.0+, with bulk data stored in HDF5 containers. For regulated industries, the platform’s audit trail mechanism satisfies FDA 21 CFR Part 11 electronic record/electronic signature requirements when deployed on validated IT infrastructure. Vehicle models comply with ISO 8855 reference frames, and sensor mounting geometry adheres to SAE J2945/1 specification for ADAS sensor placement.
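To make the log metadata described above concrete, here is a minimal sketch of how one such record could be represented and fingerprinted. It is illustrative only: the class names, field names, units, and the SHA-256 digest are assumptions and do not reflect the platform's actual log schema or audit-trail mechanism.

```python
import hashlib
import json
from dataclasses import asdict, dataclass, replace


@dataclass(frozen=True)
class EnvironmentState:
    solar_zenith_deg: float
    relative_humidity_pct: float
    precipitation_mm_per_h: float


@dataclass(frozen=True)
class SensorConfig:
    exposure_time_s: float
    gain_db: float
    fov_deg: float


@dataclass(frozen=True)
class VehicleState:
    yaw_rate_rad_s: float
    lateral_accel_m_s2: float
    wheel_slip_ratio: float


@dataclass(frozen=True)
class LogRecord:
    """One timestamped record; frozen=True rejects attribute reassignment."""
    timestamp_us: int
    environment: EnvironmentState
    sensor: SensorConfig
    vehicle: VehicleState


def record_digest(record: LogRecord) -> str:
    """SHA-256 over a canonical JSON form, so any later change is detectable."""
    payload = json.dumps(asdict(record), sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


# Example values are made up for illustration.
record = LogRecord(
    timestamp_us=1_000_000,
    environment=EnvironmentState(35.0, 60.0, 2.5),
    sensor=SensorConfig(0.008, 6.0, 120.0),
    vehicle=VehicleState(0.05, 1.2, 0.02),
)
altered = replace(record, timestamp_us=1_000_001)
digests_differ = record_digest(record) != record_digest(altered)
```

Storing the digest alongside each record is one common way to make tampering evident; a regulated deployment would add signatures and access controls on top.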

Software & Data Management

The platform employs a distributed, scalable architecture deployable on on-premises HPC clusters or on Microsoft Azure cloud infrastructure. Scenario versioning, parameter sweep automation, and batch execution are managed through VRXPERIENCE Scenario Manager, a web-based orchestration interface supporting CI/CD pipeline integration (Jenkins, GitLab CI). Raw sensor outputs are stored in compressed, indexed HDF5 archives with embedded calibration metadata. Post-processing tools include automated perception metric computation (mAP, IoU, false positive/negative rates), temporal consistency analysis for sensor fusion algorithms, and SOTIF hazard exposure scoring. All user-defined scripts (Python, C++) execute within sandboxed runtime environments with deterministic memory allocation and cycle-accurate timing guarantees. Integration with ANSYS Twin Builder enables co-simulation of embedded ECU software with plant models, while Simulink Real-Time targets support deterministic HIL execution at ≤10 ms step size.
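Of the perception metrics listed above, IoU and the false positive/negative counts are simple enough to sketch directly. The code below is a generic illustration, not the platform's post-processing tool. The `Box` type, the function names, the greedy matching strategy, and the 0.5 threshold are all assumptions. A full mAP computation would additionally rank predictions by confidence.

```python
from typing import List, NamedTuple, Tuple


class Box(NamedTuple):
    """Axis-aligned 2D bounding box in pixel coordinates."""
    x_min: float
    y_min: float
    x_max: float
    y_max: float


def area(b: Box) -> float:
    return max(0.0, b.x_max - b.x_min) * max(0.0, b.y_max - b.y_min)


def iou(a: Box, b: Box) -> float:
    """Intersection over union of two boxes; 0.0 when they do not overlap."""
    inter_w = max(0.0, min(a.x_max, b.x_max) - max(a.x_min, b.x_min))
    inter_h = max(0.0, min(a.y_max, b.y_max) - max(a.y_min, b.y_min))
    inter = inter_w * inter_h
    union = area(a) + area(b) - inter
    return inter / union if union > 0.0 else 0.0


def count_matches(preds: List[Box], truths: List[Box],
                  threshold: float = 0.5) -> Tuple[int, int, int]:
    """Greedy one-to-one matching; returns (true_pos, false_pos, false_neg)."""
    unmatched = list(range(len(truths)))
    true_pos = 0
    for pred in preds:
        # Pick the best still-unmatched ground-truth box for this prediction.
        best = max(unmatched, key=lambda i: iou(pred, truths[i]), default=None)
        if best is not None and iou(pred, truths[best]) >= threshold:
            true_pos += 1
            unmatched.remove(best)
    false_pos = len(preds) - true_pos
    false_neg = len(unmatched)
    return true_pos, false_pos, false_neg
```

Because simulation provides exact ground-truth boxes, counts like these can be tracked per scenario and compared across algorithm releases.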

Applications

VRXPERIENCE serves as the foundational validation layer across the autonomous vehicle development lifecycle: early-stage algorithm robustness testing under synthetic weather degradation (fog scattering models, rain streak artifacts); sensor fusion validation using synchronized multi-modal outputs (camera + LiDAR + radar timestamps aligned to µs precision); regulatory submission evidence generation for UN R157 (ALKS) and FMVSS No. 135 compliance; human-machine interface (HMI) evaluation in DIL setups with motion platforms and VR headsets; and headlamp adaptive beam pattern verification against ECE R112 photometric limits. Automotive OEMs and Tier 1 suppliers use the platform for regression testing across firmware releases, corner-case stress testing (e.g., low-SNR radar detection in heavy rain), and safety argument development per ISO/PAS 21448. Academic research groups use its open APIs for reinforcement learning policy training in physically grounded environments where reward signals derive from measurable physical quantities rather than heuristic proxies.
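Sensor fusion validation depends on pairing samples from different sensors by timestamp. The sketch below shows one generic way to do this, nearest-neighbor matching within a tolerance on integer microsecond timestamps. It is not the platform's synchronization mechanism, and the function names and tolerance parameter are hypothetical.

```python
from bisect import bisect_left
from typing import List, Sequence, Tuple


def nearest_index(sorted_ts: Sequence[int], t: int) -> int:
    """Index of the timestamp in sorted_ts closest to t (sorted_ts non-empty)."""
    i = bisect_left(sorted_ts, t)
    if i == 0:
        return 0
    if i == len(sorted_ts):
        return len(sorted_ts) - 1
    before, after = sorted_ts[i - 1], sorted_ts[i]
    # Ties go to the earlier sample.
    return i if (after - t) < (t - before) else i - 1


def align(reference_ts: Sequence[int], other_ts: Sequence[int],
          tolerance_us: int) -> List[Tuple[int, int]]:
    """Pair each reference sample with the nearest other-sensor sample.

    Both inputs are ascending timestamps in microseconds. Reference samples
    with no partner within tolerance_us are skipped.
    """
    pairs: List[Tuple[int, int]] = []
    if not other_ts:
        return pairs
    for ref_idx, t in enumerate(reference_ts):
        j = nearest_index(other_ts, t)
        if abs(other_ts[j] - t) <= tolerance_us:
            pairs.append((ref_idx, j))
    return pairs
```

Keeping timestamps as integer microseconds avoids the rounding drift that floating-point seconds can introduce over long recordings.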

FAQ

Does VRXPERIENCE support real-time hardware-in-the-loop (HIL) execution?

Yes. When deployed on a deterministic real-time OS (QNX, VxWorks) with FPGA-accelerated sensor rendering, the platform achieves sub-10 ms loop latency for camera/LiDAR output generation synchronized to vehicle bus signals (CAN FD, Ethernet AVB).

Can third-party vehicle dynamics models be imported?

Yes, via the Functional Mock-up Interface (FMI) 2.0 standard. Verified integrations include IPG CarMaker, dSPACE ASM, and custom Simscape Driveline models.

How is sensor physical accuracy validated?

Sensor models are validated against manufacturer datasheets and lab-measured performance curves (e.g., camera MTF, LiDAR range noise floor, radar angular resolution). BRDF/BSDF material properties are sourced from ANSYS OMS hardware measurements or certified databases (e.g., MERL, SIGGRAPH materials).

Is cloud deployment supported?

Yes. Fully containerized (Docker/Kubernetes) deployment is certified for Microsoft Azure GPU instances (NCv3/NV series) and supports autoscaling for massively parallel scenario execution.

What map formats are natively supported?

OpenDRIVE (.xodr), CityEngine CGA rules, OpenStreetMap XML/PBF, and HD map formats from HERE, TomTom, and NDS. GIS terrain data (GeoTIFF, DEM) is imported via SCANeR™ Terrain Mode.
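OpenDRIVE files are plain XML, so a road network can be sanity-checked before import with standard tooling. The following is a minimal sketch using only the Python standard library. It relies on the `road` element and its `id` and `length` attributes from the OpenDRIVE format. The function name is hypothetical and this is not part of any VRXPERIENCE or SCANeR™ API.

```python
import xml.etree.ElementTree as ET
from typing import List, Tuple


def summarize_roads(xodr_text: str) -> List[Tuple[str, float]]:
    """Return (road id, length in meters) for every road in an OpenDRIVE document."""
    root = ET.fromstring(xodr_text)
    roads: List[Tuple[str, float]] = []
    for element in root.iter():
        # Strip an XML namespace prefix such as "{uri}road" if one is present.
        tag = element.tag.rsplit("}", 1)[-1]
        if tag == "road":
            road_id = element.get("id", "")
            length_m = float(element.get("length", "0"))
            roads.append((road_id, length_m))
    return roads


# A tiny hand-written document, used only to exercise the function.
SAMPLE_XODR = """
<OpenDRIVE>
  <header revMajor="1" revMinor="4"/>
  <road name="Main" length="120.5" id="1" junction="-1"/>
  <road name="Ramp" length="35.0" id="2" junction="-1"/>
</OpenDRIVE>
"""

total_length_m = sum(length for _, length in summarize_roads(SAMPLE_XODR))
```

A check like this can catch empty or truncated map exports early, before a batch of scenarios is scheduled against them.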