ErgoSIM Architectural Acoustic Environment Simulation & Human Behavioral Research Laboratory

| Brand | Kingfar |
|---|---|
| Model | ErgoSIM Acoustic Environment Simulation Lab |
| Type | Integrated Human Factors Research Laboratory |
| Core Platform | ErgoLAB Human-Machine-Environment Synchronized Cloud Platform |
| Certification | CE, FCC, RoHS, ISO 9001, ISO 14001, OHSAS 18001 |
| Compliance | Supports GLP/GMP-aligned data integrity, 21 CFR Part 11–ready audit trails (via ErgoLAB software), ASTM E336/E1573/E2235 for acoustic perception testing |

Overview

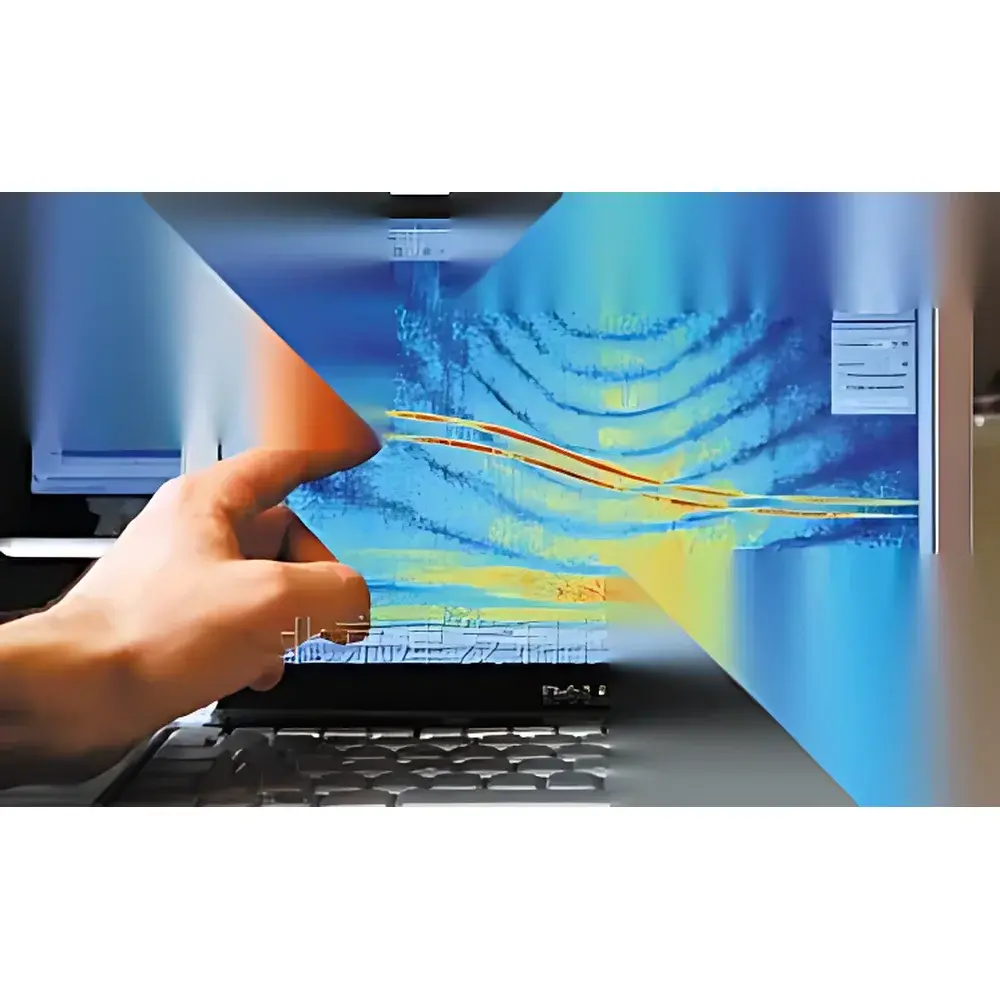

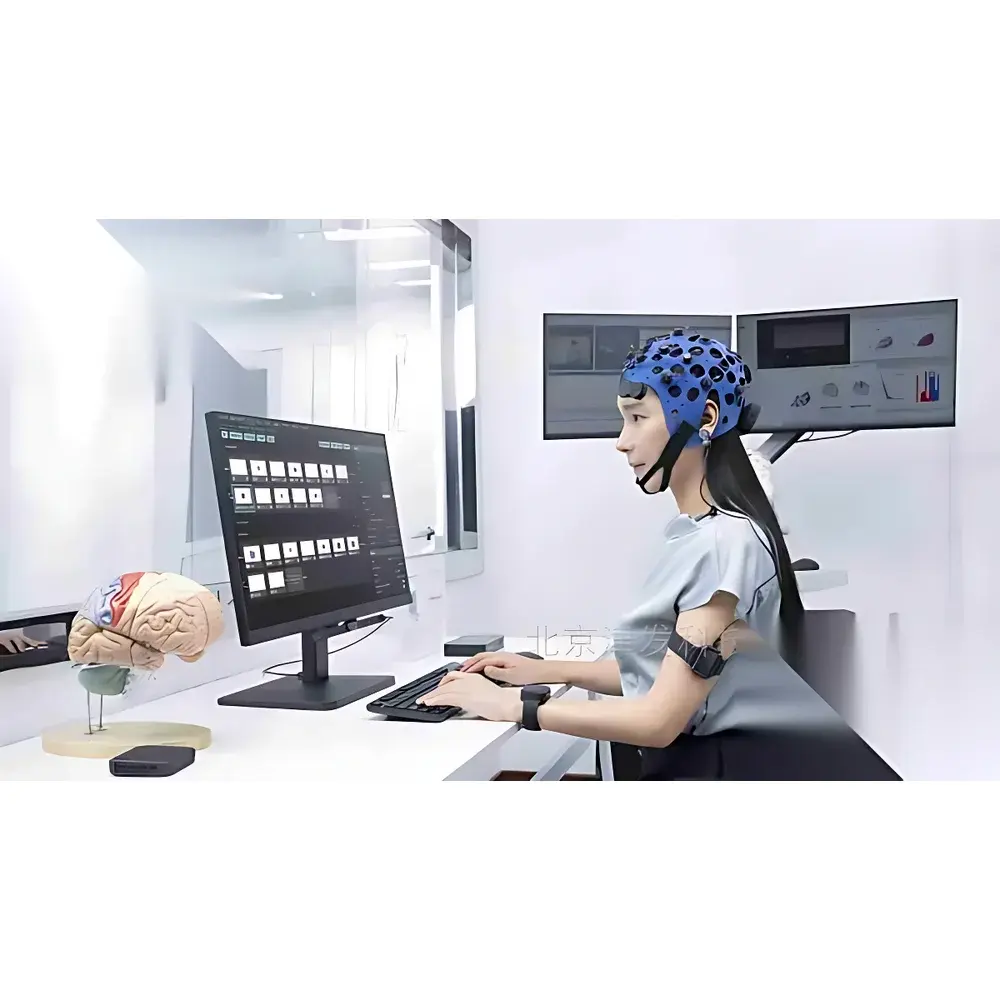

The ErgoSIM Architectural Acoustic Environment Simulation & Human Behavioral Research Laboratory is a purpose-built, integrated experimental facility for evidence-based design research at the intersection of architecture, environmental psychology, and human factors engineering. Unlike conventional acoustic test chambers or isolated sound measurement setups, it implements a closed-loop, multimodal synchronization paradigm grounded in the “Human–Building–Environment” and “Human–Information–Physical System” theoretical frameworks.

The laboratory enables controlled, ecologically valid simulation of architectural acoustic environments, including façade noise transmission, indoor reverberation profiles, HVAC-induced tonal components, spatial sound distribution in atria or open-plan offices, and restorative soundscape elements. At the same time, it captures high-temporal-resolution physiological, behavioral, cognitive, and subjective responses from human participants.

The system is built around the ErgoLAB Human-Machine-Environment Synchronized Cloud Platform, which provides microsecond-level time alignment across heterogeneous data streams:

- Physical acoustic signals (dB(A), RT60, STI, loudness in sone)
- Biometric signals (EEG, fNIRS, EDA, HRV, EMG)
- Oculomotor metrics (fixation duration, saccade amplitude, pupil dilation)
- Motion kinematics (via optical or inertial motion capture)
- Real-time behavioral annotations (via video-coded interaction logs or VR controller telemetry)

This synchronized acquisition supports causal inference between acoustic stimulus parameters and human response dynamics, which is critical for validating design hypotheses in aging-in-place environments, therapeutic landscape architecture, hospital acoustics optimization, and neurodiverse-inclusive building standards.

Key Features

- 3D Binaural Sound Playback & Spatial Audio Rendering: Integrates HRTF-based virtual auditory space generation with real-time head-tracking for ecologically valid immersive soundfield reproduction in both seated and ambulatory conditions.

- Psychoacoustic Parameter Quantification: Automated computation of ISO 532-1 loudness (Zwicker method), sharpness (Aures model), fluctuation strength, roughness, and tonality indices, each directly linked to concurrent EEG spectral power (e.g., alpha suppression), pupillary response latency, and facial action unit (AU4, AU12) activation.

- Subjective Listening Test Frameworks: Configurable implementation of standardized psychometric protocols including Direct Scaling, MUSHRA (ITU-R BS.1534), Absolute Category Rating (ACR), and Paired Comparison—fully integrated with ErgoLAB’s randomized trial engine and blinding logic.

- Multi-Modal Synchronization Engine: Hardware-triggered timestamping (≤10 µs jitter) across acoustic playback, physiological amplifiers, eye trackers, motion capture systems, and environmental sensors (temperature, humidity, illuminance, CO₂).

- Cloud-Native Data Management: Secure, role-based access to raw and processed datasets via web browser; supports DICOM-compatible storage for fNIRS/EEG, BIDS-compliant metadata tagging, and automated export to MATLAB, Python (MNE, Pandas), or SPSS.

- AI-Enhanced Behavioral Analytics: Pre-trained deep learning models for gaze-behavior mapping (e.g., visual attention allocation to noise sources), stress-state classification (using EDA+HRV+respiratory rate fusion), and acoustic preference prediction (based on demographic + cognitive profile inputs).
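
The hardware-triggered timestamping listed above implies that every stream can be re-expressed on one clock once the position of a shared trigger pulse is known in each recording. The following is a minimal sketch of that idea, not ErgoLAB's actual API: the `Stream` class, its fields, and `sample_at` are hypothetical names, and the sketch assumes each device reports a nominal sample rate and the sample index at which it saw the trigger. It does not model clock drift between devices.

```python
from bisect import bisect_left
from dataclasses import dataclass
from typing import List


@dataclass
class Stream:
    """One recorded channel; all fields are illustrative assumptions."""
    name: str
    rate_hz: float        # nominal sampling rate of the device
    trigger_index: int    # sample index at which the shared trigger was seen
    samples: List[float]

    def times(self) -> List[float]:
        # Seconds relative to the shared trigger (t = 0 at the pulse).
        return [(i - self.trigger_index) / self.rate_hz
                for i in range(len(self.samples))]


def sample_at(stream: Stream, t: float) -> float:
    """Linearly interpolate the stream's value at trigger-relative time t."""
    ts = stream.times()
    if not ts[0] <= t <= ts[-1]:
        raise ValueError(f"t={t} is outside the recorded range of {stream.name}")
    j = bisect_left(ts, t)
    if ts[j] == t:
        return stream.samples[j]
    t0, t1 = ts[j - 1], ts[j]
    w = (t - t0) / (t1 - t0)
    return stream.samples[j - 1] * (1 - w) + stream.samples[j] * w


# Synthetic example data: a 4 Hz EDA stream and a 10 Hz pupil stream.
eda = Stream("EDA", rate_hz=4.0, trigger_index=2,
             samples=[0.0, 1.0, 2.0, 3.0, 4.0])
pupil = Stream("pupil", rate_hz=10.0, trigger_index=5,
               samples=[float(i) for i in range(11)])

# Resample the slower EDA stream onto the pupil stream's timestamps.
eda_start, eda_end = eda.times()[0], eda.times()[-1]
aligned = [(t, sample_at(eda, t), p)
           for t, p in zip(pupil.times(), pupil.samples)
           if eda_start <= t <= eda_end]
```

Each tuple in `aligned` holds a trigger-relative time, the interpolated EDA value, and the pupil sample at that time, which is the shape most downstream statistics expect.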

Sample Compatibility & Compliance

The laboratory accommodates diverse participant cohorts, including older adults (≥65 years), individuals with hearing impairment (with configurable hearing loss simulation filters), neurodivergent populations (ASD, ADHD), and clinical rehabilitation groups, without requiring invasive instrumentation. Playback levels of all acoustic stimuli are calibrated with traceability to IEC 61672-1 Class 1 sound level meters. Subjective evaluation protocols adhere to ITU-T P.800 and ISO/IEC 20247 for human-centered audio quality assessment. Data handling conforms to GDPR Article 32 technical safeguards and supports FDA 21 CFR Part 11 compliance through ErgoLAB’s electronic signature workflow, immutable audit logs, and user-access history retention. Physical environment monitoring subsystems meet ISO 7730 (thermal comfort), ISO 3382-2 (room acoustic measurement), and EN 12354-4 (sound insulation prediction) requirements.

Software & Data Management

ErgoLAB serves as the unified software backbone, providing modular, license-flexible applications: ErgoLAB Acoustic Perception Module (for real-time psychoacoustic index calculation), ErgoLAB Multimodal Sync Manager (with customizable trigger routing and buffer management), ErgoLAB Behavioral Coding Studio (supporting ASL, ELAN, and custom ontology-based annotation), and ErgoLAB AI Analytics Workbench (featuring transfer learning pipelines for cross-cohort generalization). All modules generate FAIR-compliant outputs (Findable, Accessible, Interoperable, Reusable) with embedded provenance metadata (instrument models, firmware versions, calibration dates, environmental baselines). Raw data archives are stored in compressed HDF5 format with SHA-256 checksums; processed derivatives include CSV exports for statistical modeling and JSON-LD for semantic graph integration.
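
To illustrate how a SHA-256 checksum and provenance metadata can travel with an archive, here is a minimal sketch using only the Python standard library. It is not ErgoLAB's storage format: the JSON sidecar layout, the field names (`firmware_version`, `calibration_date`), and the helper functions are assumptions made for the example, and a few placeholder bytes stand in for a real HDF5 file.

```python
import hashlib
import json
import tempfile
from pathlib import Path


def sha256_of_file(path: Path, chunk_size: int = 1 << 20) -> str:
    """Stream the file through SHA-256 so large archives need not fit in memory."""
    digest = hashlib.sha256()
    with path.open("rb") as fh:
        for chunk in iter(lambda: fh.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def write_sidecar(archive: Path, provenance: dict) -> Path:
    """Write a JSON sidecar holding the checksum plus provenance fields."""
    sidecar = archive.with_name(archive.name + ".json")
    record = {"file": archive.name,
              "sha256": sha256_of_file(archive),
              **provenance}
    sidecar.write_text(json.dumps(record, indent=2))
    return sidecar


def verify(archive: Path, sidecar: Path) -> bool:
    """Return True when the archive still matches its recorded checksum."""
    recorded = json.loads(sidecar.read_text())["sha256"]
    return sha256_of_file(archive) == recorded


with tempfile.TemporaryDirectory() as tmp:
    archive = Path(tmp) / "session_001.h5"
    # Placeholder content; a real archive would be written by an HDF5 library.
    archive.write_bytes(b"placeholder bytes standing in for an HDF5 archive")
    sidecar = write_sidecar(archive, {"firmware_version": "0.0.0-example",
                                      "calibration_date": "1970-01-01"})
    intact = verify(archive, sidecar)            # checksum matches
    archive.write_bytes(b"modified after export")
    change_detected = not verify(archive, sidecar)
```

Hashing in fixed-size chunks keeps memory use flat regardless of archive size, which matters for long multimodal recordings.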

Applications

- Evidence-Based Design Validation: Quantifying impact of façade material selection, ceiling absorber placement, or HVAC duct silencing on occupant alertness (EEG theta/beta ratio), speech intelligibility (STI vs. task performance), and perceived restorativeness (PRS scale + fNIRS prefrontal oxygenation).

- Aging-In-Place Environments: Assessing how low-frequency rumble reduction affects gait stability (inertial motion capture + EMG co-contraction index) and fall-risk-associated startle reflex modulation (EDA rise time + blink latency).

- Therapeutic Landscape Architecture: Correlating natural soundscapes (birdsong spectral centroid, water flow entropy) with parasympathetic reactivation (RMSSD increase) and attention restoration (SART omission errors).

- Hospital Acoustic Optimization: Evaluating alarm audibility under background noise (ANSI S3.6 speech discrimination thresholds) while measuring nurse cognitive load (pupillary dilation variance during simulated handover tasks).

- Neurodiversity-Inclusive Design: Characterizing sensory overload thresholds in autistic participants using dynamic range compression curves derived from simultaneous EEG gamma-band power and self-report sliders.
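
Several of the applications above depend on heart rate variability metrics such as RMSSD, the root mean square of successive differences between RR intervals. A minimal sketch of that computation follows. The RR values are synthetic numbers chosen for illustration, not measured data, and the function name `rmssd` is our own rather than part of any ErgoLAB module.

```python
import math
from typing import Sequence


def rmssd(rr_ms: Sequence[float]) -> float:
    """Root mean square of successive RR-interval differences, in ms."""
    if len(rr_ms) < 2:
        raise ValueError("need at least two RR intervals")
    diffs = [b - a for a, b in zip(rr_ms, rr_ms[1:])]
    return math.sqrt(sum(d * d for d in diffs) / len(diffs))


# Synthetic RR intervals (ms) for two hypothetical conditions.
baseline = [800.0, 810.0, 790.0, 805.0]
soundscape = [820.0, 860.0, 810.0, 870.0]

# A positive change means RMSSD is higher in the second condition.
change = rmssd(soundscape) - rmssd(baseline)
```

In practice the RR series would first be cleaned of ectopic beats and artifacts; that step is omitted here.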

FAQ

Does the system support real-time acoustic parameter adjustment during ongoing experiments?

Yes—via bidirectional OSC (Open Sound Control) integration between the acoustic rendering engine and ErgoLAB, enabling stimulus adaptation based on real-time physiological feedback (e.g., increasing masking noise when EDA exceeds threshold).
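
The EDA-threshold example in this answer can be sketched as a small controller with hysteresis. Everything here is an assumption for illustration: the OSC address `/masking/gain_db`, the threshold values, and the `MaskingController` class are hypothetical, and the `send` callback stands in for whatever OSC client the installation uses.

```python
from typing import Callable, List, Tuple

Send = Callable[[str, float], None]


class MaskingController:
    """Boost masking noise when EDA crosses a threshold; reset on recovery."""

    def __init__(self, send: Send, high_us: float = 8.0,
                 low_us: float = 6.0, boost_db: float = 3.0) -> None:
        self.send = send            # callback that delivers (address, value)
        self.high_us = high_us      # EDA level (microsiemens) that triggers a boost
        self.low_us = low_us        # EDA level below which the boost is removed
        self.boost_db = boost_db
        self.elevated = False

    def update(self, eda_us: float) -> None:
        # Two thresholds (hysteresis) prevent rapid toggling near a single limit.
        if not self.elevated and eda_us > self.high_us:
            self.elevated = True
            self.send("/masking/gain_db", self.boost_db)
        elif self.elevated and eda_us < self.low_us:
            self.elevated = False
            self.send("/masking/gain_db", 0.0)


# Record outgoing messages in a list instead of sending them over the network.
sent: List[Tuple[str, float]] = []
ctrl = MaskingController(send=lambda addr, val: sent.append((addr, val)))
for eda in [5.0, 7.0, 8.5, 9.0, 7.0, 5.5, 8.2]:
    ctrl.update(eda)
```

Messages are emitted only on state changes, so a noisy EDA signal hovering between the two thresholds produces no traffic.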

Can existing third-party hardware (e.g., Biosemi ActiveTwo, Tobii Pro Fusion) be synchronized with ErgoLAB?

Yes—all major biosignal and eye-tracking vendors are supported through documented SDKs, IEEE 11073-10471-compliant HL7 interfaces, or TTL pulse synchronization.

Is source code access available for custom algorithm development?

Kingfar provides documented C++/Python APIs and containerized development environments for validated algorithm integration; proprietary core synchronization firmware remains closed-source per ISO/IEC 27001 controls.

What is the minimum recommended room size for a single test chamber?

For binaural spatial audio fidelity and motion capture volume, ≥4.0 m × 4.0 m × 2.8 m (L×W×H) is recommended; smaller configurations (≥3.0 m × 3.0 m) are feasible with directional speaker arrays and seated protocols.

How is calibration traceability maintained across acoustic and biometric channels?

Annual NIST-traceable calibration certificates are provided for all acoustic transducers and environmental sensors; biometric devices retain vendor-certified calibration logs synced to ErgoLAB’s metadata repository.