MYS-1 T-Maze Video Tracking System for Rodent Behavioral Analysis

| Origin | Sichuan, China |
|---|---|
| Manufacturer Type | Distributor |
| Origin Category | Domestic |
| Model | MYS-1 |
| Pricing | Upon Request |

Overview

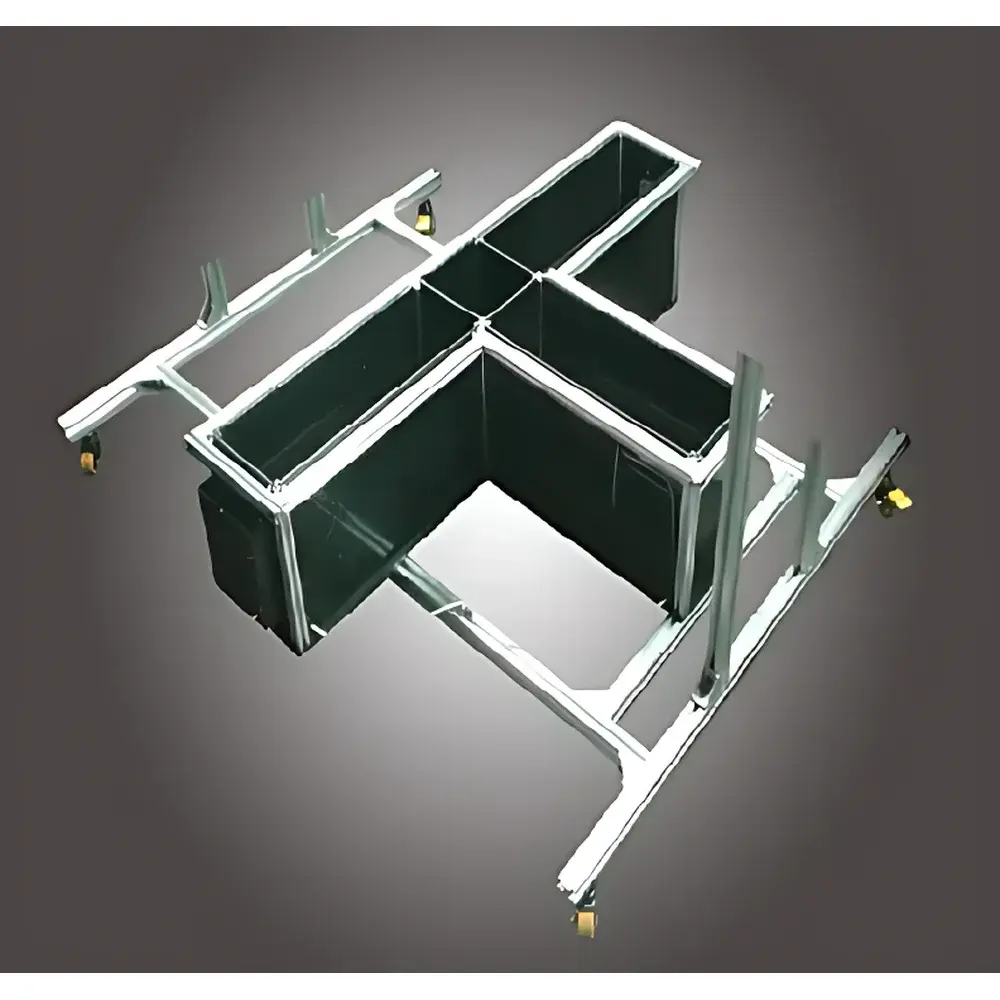

The MYS-1 T-Maze Video Tracking System is a purpose-built behavioral phenotyping platform engineered for quantitative assessment of spatial learning, working memory, and reference memory in rodents—primarily rats and mice. Based on the classical T-maze paradigm, the system leverages natural exploratory drive and food-reward motivation to evaluate hippocampal- and prefrontal cortex-dependent cognitive functions without requiring aversive conditioning or rule acquisition. The apparatus consists of three identical arms arranged in a T-configuration, each terminating in a food reward zone. Spatial memory performance is inferred from objective behavioral metrics—including arm entry sequence, dwell time per arm, latency to first choice, revisitation frequency, path trajectory, and error rate—captured via synchronized high-resolution video tracking and automated event logging. Unlike passive avoidance or fear-conditioning assays, the T-maze paradigm minimizes stress-induced confounds while preserving ecological validity, making it especially suitable for longitudinal studies of neurocognitive decline, pharmacological intervention, or genetic model characterization.

Key Features

- Modular aluminum-frame T-maze structure (arm dimensions: 425 mm L × 150 mm W × 250 mm H) with interchangeable ABS plastic panels; configurable for albino and pigmented rodents via color-optimized flooring and wall inserts.

- Integrated multimodal stimulus delivery: programmable audio cues (frequency/tone/duration), LED visual stimuli (intensity/timing), and calibrated foot-shock via stainless-steel grid floor (voltage range: 5–120 V DC; current range: 0.1–4 mA, constant-voltage or constant-current modes).

- High-fidelity video acquisition subsystem: industrial-grade camera mounted on adjustable tripod, equipped with 3.5–8 mm varifocal lens, diffuse LED illumination array for consistent contrast, and PCIe-based video capture card supporting real-time MPEG-4 compression.

- Automated tracking engine utilizing adaptive color-difference segmentation and dynamic motion modeling, eliminating the need for manual annotation, camera recalibration, or dye marking.

- Real-time trajectory reconstruction with sub-pixel spatial resolution; output includes XY-coordinate time series, velocity vectors, dwell heatmaps, and arm-transition matrices.

- Full software control interface with experiment protocol scripting, stimulus-event synchronization, and hardware-triggered recording start/stop.
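As an illustration of how the trajectory outputs listed above relate to one another, the following minimal sketch derives velocity vectors and arm-transition counts from a tracked time series. The `(t, x, y)` sample layout, the zone labels, and both function names are assumptions made for this example; they are not the MYS-1 software's actual data structures or API.

```python
from collections import Counter


def velocity_vectors(samples):
    """Finite-difference velocity (vx, vy) between consecutive (t, x, y) samples."""
    velocities = []
    for (t0, x0, y0), (t1, x1, y1) in zip(samples, samples[1:]):
        dt = t1 - t0
        if dt <= 0:
            raise ValueError("timestamps must be strictly increasing")
        velocities.append(((x1 - x0) / dt, (y1 - y0) / dt))
    return velocities


def arm_transition_counts(zone_labels):
    """Count moves between different zones in a per-frame zone label sequence."""
    counts = Counter()
    for previous, current in zip(zone_labels, zone_labels[1:]):
        if previous != current:
            counts[(previous, current)] += 1
    return counts


# Hypothetical data: time in seconds, position in millimetres.
samples = [(0.0, 0.0, 0.0), (0.5, 10.0, 0.0), (1.0, 10.0, 20.0)]
velocities = velocity_vectors(samples)

# Hypothetical per-frame zone labels for one session.
zone_labels = ["start", "start", "left", "left", "start", "right"]
transitions = arm_transition_counts(zone_labels)
```

Each key of `transitions` is a `(from_zone, to_zone)` pair, so the counter can be laid out as a matrix with one row and one column per zone.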

Sample Compatibility & Compliance

The MYS-1 system is validated for use with Sprague-Dawley, Wistar, Long-Evans, C57BL/6, and BALB/c rodent strains across standard weight and age ranges (e.g., 200–350 g rats; 20–30 g mice). All materials comply with ISO 10993-5 (in vitro cytotoxicity testing) and RoHS Directive 2011/65/EU for restricted substances. The stimulus delivery subsystem meets IEC 60601-1 electrical safety requirements. Experimental protocols align with the NIH Guide for the Care and Use of Laboratory Animals and AAALAC International accreditation requirements. Data export formats (CSV, XML) support integration into GLP-compliant workflows, and audit-trail functionality (user login logs, parameter change timestamps, and raw video hash verification) is available upon configuration.
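The raw video hash verification mentioned above can be pictured with a short sketch. The datasheet does not state which hash algorithm the system uses, so SHA-256 is an assumption here, and `file_sha256` and `verify_video` are hypothetical helper names rather than part of the MYS-1 software.

```python
import hashlib
import os
import tempfile


def file_sha256(path, chunk_size=1 << 20):
    """Hash a file in chunks so long recordings need not fit in memory."""
    digest = hashlib.sha256()
    with open(path, "rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def verify_video(path, expected_hex):
    """Return True if the file still matches the hash recorded at acquisition."""
    return file_sha256(path) == expected_hex.lower()


# Demonstration with a small temporary file standing in for a raw video.
with tempfile.NamedTemporaryFile(delete=False, suffix=".bin") as tmp:
    tmp.write(b"stand-in for raw video bytes")
    demo_path = tmp.name

recorded_hash = file_sha256(demo_path)               # stored in the audit trail
unchanged = verify_video(demo_path, recorded_hash)   # file untouched so far

with open(demo_path, "ab") as handle:                # simulate later modification
    handle.write(b"x")
tamper_detected = not verify_video(demo_path, recorded_hash)

os.remove(demo_path)
```

Recomputing the hash before analysis and comparing it with the stored value is one way to show that a recording has not changed since acquisition.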

Software & Data Management

The proprietary analysis suite operates on Windows 10/11 (64-bit) with minimum system requirements: Intel Core i3-4170 or equivalent (≥2.0 GHz), 4 GB RAM, 1440×900 display resolution, and ≥500 GB SSD storage. All experimental metadata—including subject ID, genotype, drug dose, session date/time, and operator ID—are stored in an embedded SQLite database with ACID-compliant transactions. Video files are archived in time-stamped folders using MPEG-4 Part 2 compression (low bitrate, high temporal fidelity), enabling continuous 24+ hour recording per session. Analytical outputs include: (1) trajectory plots with arm-specific dwell overlays; (2) kinematic summary tables (total path length, mean velocity, arm preference ratios); (3) latency histograms; (4) error-type classification (working vs. reference memory errors); and (5) export-ready CSV files compatible with SPSS, SAS, R, and Python pandas workflows. Role-based access control supports multi-user lab environments.
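To show how the kinematic summary values could be reproduced independently from an exported file, here is a minimal sketch using only the Python standard library. The column names (`time_s`, `x_mm`, `y_mm`, `arm`) are assumptions for illustration; the actual export schema should be checked against a real MYS-1 CSV file. Arm preference is approximated as the fraction of samples per zone, which assumes a constant frame rate.

```python
import csv
import io
import math
from collections import Counter


def kinematic_summary(csv_text):
    """Total path length, mean velocity, and per-zone sample fractions."""
    rows = list(csv.DictReader(io.StringIO(csv_text)))
    if len(rows) < 2:
        raise ValueError("need at least two samples")
    t = [float(r["time_s"]) for r in rows]
    x = [float(r["x_mm"]) for r in rows]
    y = [float(r["y_mm"]) for r in rows]

    # Sum of straight-line distances between consecutive positions.
    total_path = sum(
        math.hypot(x[i + 1] - x[i], y[i + 1] - y[i]) for i in range(len(rows) - 1)
    )
    duration = t[-1] - t[0]
    mean_velocity = total_path / duration if duration > 0 else 0.0

    # Fraction of samples spent in each zone (constant frame rate assumed).
    zone_counts = Counter(r["arm"] for r in rows)
    preference = {zone: n / len(rows) for zone, n in zone_counts.items()}

    return {
        "total_path_mm": total_path,
        "mean_velocity_mm_s": mean_velocity,
        "arm_preference": preference,
    }


# Hypothetical export content with made-up values.
EXAMPLE_CSV = """time_s,x_mm,y_mm,arm
0.0,0,0,start
1.0,30,40,left
2.0,30,100,left
3.0,90,180,right
"""

summary = kinematic_summary(EXAMPLE_CSV)
```

The same calculation carries over to pandas or R once the real column names are known.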

Applications

- Evaluation of hippocampal-dependent spatial working memory in models of Alzheimer’s disease (e.g., APP/PS1, 3xTg), aging, or transient global ischemia.

- Pharmacological screening of nootropic agents, NMDA receptor modulators, or cholinergic enhancers.

- Functional validation of gene-editing outcomes (e.g., CRISPR-Cas9 knockouts in Drd1, Grin2b, or Bdnf loci).

- Assessment of prefrontal cortical dysfunction in schizophrenia-relevant models (e.g., maternal immune activation, neonatal ventral hippocampal lesion).

- Longitudinal monitoring of cognitive resilience following environmental enrichment or exercise interventions.

- Standardized endpoint measurement in OECD TG 426 (developmental neurotoxicity) and EPA OPPTS 870.6100 (subchronic neurotoxicity) study designs.

FAQ

What types of memory does the T-maze paradigm specifically assess?

The T-maze evaluates both spatial working memory (short-term retention of recently visited arms) and reference memory (long-term knowledge of rewarded arm location across sessions), depending on task configuration (e.g., forced-choice vs. spontaneous alternation vs. delayed non-match-to-sample).
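For the spontaneous alternation configuration, a common scoring convention is the percentage of trials on which the animal chooses the goal arm opposite to its previous choice. The sketch below illustrates that convention only; whether the MYS-1 software scores alternation in exactly this way is not stated in this document, and the function name and choice labels are hypothetical.

```python
def alternation_percentage(choices):
    """Percentage of consecutive trial pairs in which the chosen arm changed."""
    if len(choices) < 2:
        raise ValueError("need at least two trials to score alternation")
    alternations = sum(1 for a, b in zip(choices, choices[1:]) if a != b)
    return 100.0 * alternations / (len(choices) - 1)


# Hypothetical goal-arm choices over seven trials ("L" = left, "R" = right).
choices = ["L", "R", "L", "L", "R", "R", "L"]
score = alternation_percentage(choices)  # 4 alternations out of 6 pairs
```

Under this convention, scores near 50% indicate chance-level choice between the two goal arms.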

Is the system compatible with optogenetic or fiber photometry integration?

Yes—the MYS-1 features TTL-compatible digital I/O ports (8-channel input/output) for hardware synchronization with external devices such as laser drivers, LED controllers, or DAQ systems.

Can data be exported in formats compliant with FAIR principles?

Raw videos are stored with embedded EXIF metadata; behavioral metrics are exported as structured CSV with column headers conforming to BIDS-derivatives conventions; full experimental provenance is retained in the SQLite database schema.

Does the software support batch processing of multiple video files?

Yes—through command-line interface (CLI) mode, users can queue unattended analysis of hundreds of sessions with identical parameter sets, including background subtraction thresholds and ROI definitions.

How is calibration performed for spatial accuracy?

A one-time pixel-to-millimeter mapping is established using the built-in checkerboard calibration tool; subsequent experiments inherit this transform unless physical repositioning of the camera occurs.
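The idea behind a pixel-to-millimeter mapping can be illustrated with a single scale factor derived from two image points a known distance apart. This is a simplified sketch that assumes an overhead camera and negligible lens distortion; the built-in checkerboard tool presumably does more than this, and the function names and pixel coordinates below are hypothetical.

```python
import math


def mm_per_pixel(point_a_px, point_b_px, known_distance_mm):
    """Scale factor from two image points separated by a known real distance."""
    pixel_distance = math.hypot(
        point_b_px[0] - point_a_px[0], point_b_px[1] - point_a_px[1]
    )
    if pixel_distance == 0:
        raise ValueError("reference points must be distinct")
    return known_distance_mm / pixel_distance


def to_mm(point_px, origin_px, scale):
    """Convert a pixel coordinate to millimetres relative to a chosen origin."""
    return (
        (point_px[0] - origin_px[0]) * scale,
        (point_px[1] - origin_px[1]) * scale,
    )


# Hypothetical example: the two ends of one 425 mm arm appear 850 px apart.
scale = mm_per_pixel((100, 200), (950, 200), 425.0)
position_mm = to_mm((300, 260), (100, 200), scale)
```

Because the scale depends on camera height and zoom, it stays valid only while the camera and lens remain fixed, which matches the recalibration condition described above.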