Panlab SMART 3.0 Behavioral Video Tracking Software Suite

| Brand | Harvard Apparatus |
|---|---|
| Origin | USA |
| Distribution Model | Authorized Distributor |
| Import Status | Imported |
| Compatible Models | All Panlab Hardware Platforms |
| Price Range | USD $6,800 – $13,600 (FOB US) |

Overview

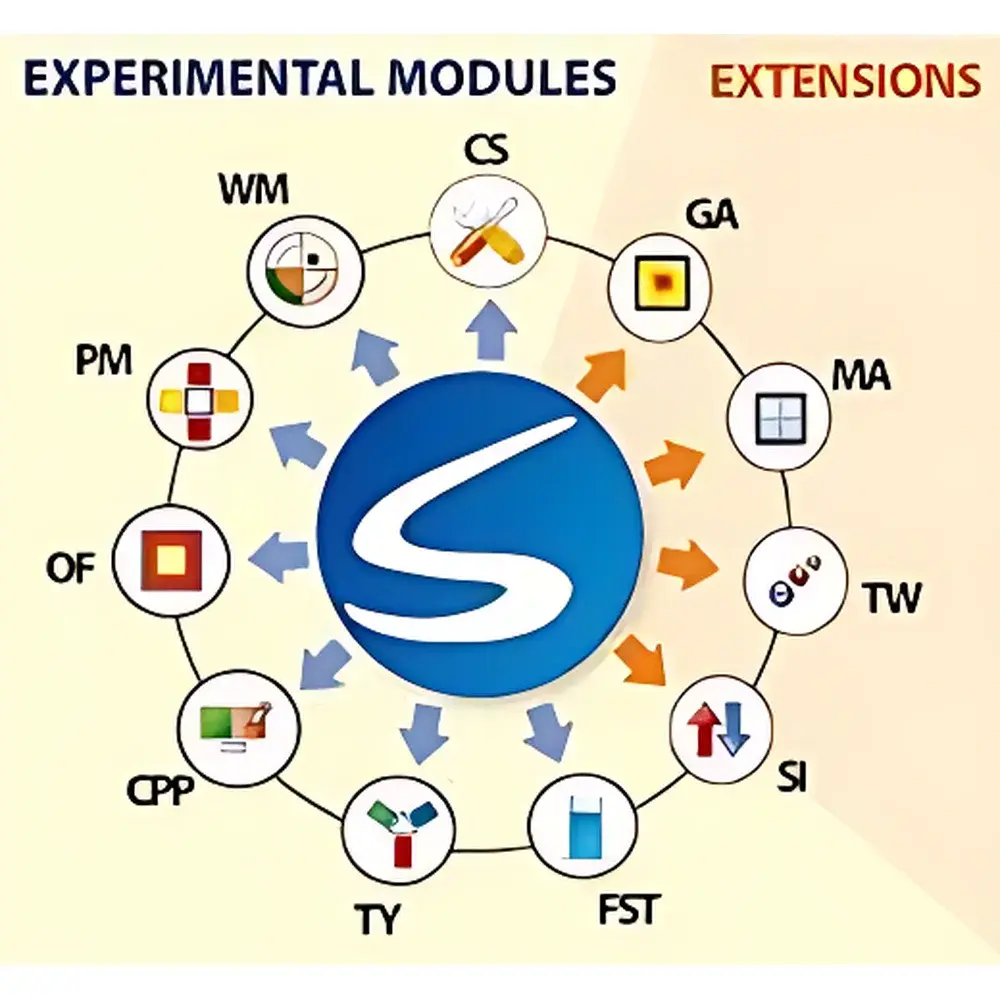

The Panlab SMART 3.0 Behavioral Video Tracking Software Suite is a validated, modular platform for quantitative analysis of rodent and zebrafish behavior in preclinical neuroscience, pharmacology, and translational behavioral research. Developed over more than 15 years by Panlab, a brand of Harvard Apparatus (USA), SMART 3.0 combines frame-difference-based computer vision algorithms with patented TriWise™ three-point body segmentation to reconstruct trajectories reproducibly under variable lighting and contrast conditions. Unlike conventional centroid-tracking systems, SMART 3.0 distinguishes head, center-of-mass, and tail coordinates in real time, which allows orientation-dependent behaviors such as rearing, rotation, and stretching, as well as social proximity metrics, to be quantified precisely. The software runs on standard Windows workstations and integrates natively with Panlab’s hardware ecosystem (e.g., LABORAS cages, water maze platforms, elevated plus mazes), supporting both live acquisition with synchronized event logging and offline batch processing.
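To illustrate why separate head, center, and tail coordinates matter, the following minimal Python sketch derives a heading angle and a net rotation from such points. It is not the TriWise™ implementation, which is not documented here; the function names and the `(x, y)` tuple format are assumptions made for illustration only.

```python
import math

# Hypothetical helpers: names and the (x, y) tuple format are assumptions,
# not part of the SMART 3.0 software or its export format.

def heading_deg(center, head):
    """Angle of the center-to-head vector in degrees, in [0, 360).

    In image coordinates the y axis usually points down, so angles
    increase clockwise on screen.
    """
    dx = head[0] - center[0]
    dy = head[1] - center[1]
    return math.degrees(math.atan2(dy, dx)) % 360.0

def body_length(head, center, tail):
    """Head-to-center plus center-to-tail distance (useful for stretching)."""
    return math.dist(head, center) + math.dist(center, tail)

def net_rotation_deg(headings):
    """Sum of signed frame-to-frame heading changes.

    Each change is wrapped into [-180, 180) so that crossing the
    0/360 boundary does not register as a near-full turn.
    """
    total = 0.0
    for prev, cur in zip(headings, headings[1:]):
        total += (cur - prev + 180.0) % 360.0 - 180.0
    return total
```

A centroid-only tracker cannot supply `heading_deg`, because a single point carries no direction; this is the practical difference between centroid tracking and three-point tracking.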

Key Features

- TriWise™ three-point tracking engine for robust pose estimation across diverse strains, coat colors, and illumination settings

- Modular architecture: Select only required modules (e.g., SMART-WM, SMART-PM, SMART-FST) to align with experimental scope and budget constraints

- Simultaneous multi-animal analysis: Up to 8 subjects in a shared arena (SMART-SI); up to 255 independent regions per session (SMART-MA)

- Global Activity (SMART-GA) module that uses frame-to-frame differential motion detection rather than trajectory-based algorithms, optimized for immobility classification in forced swim, tail suspension, and fear conditioning assays

- Configurable region-of-interest (ROI) definition without geometric presets (SMART-CS), enabling custom maze layouts or irregular arenas

- Digital I/O extension (SMART-IO) for hardware synchronization with external stimuli (electrical foot shock, platform submersion, door actuation)

- Real-time visualization of trajectory plots, heatmaps, 2D/3D activity distribution maps, and temporal behavior histograms

- Export-ready output formats: CSV, Excel-compatible .xls, and structured XML for LIMS or ELN integration
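The frame-to-frame differential motion detection attributed to SMART-GA can be sketched in general terms as follows. This is a generic illustration of the technique, not Panlab's algorithm: the thresholds, the function names, and the representation of each frame as a flat sequence of grayscale values are all assumptions.

```python
# Generic frame-differencing sketch. All names and default thresholds
# are hypothetical and would need tuning for a real assay.

def changed_fraction(prev_frame, frame, pixel_threshold=15):
    """Fraction of pixels whose grayscale value changed by more than pixel_threshold."""
    if len(prev_frame) != len(frame) or not frame:
        raise ValueError("frames must be non-empty and of equal size")
    changed = sum(1 for a, b in zip(prev_frame, frame) if abs(a - b) > pixel_threshold)
    return changed / len(frame)

def immobility_bouts(frames, fps, pixel_threshold=15,
                     activity_threshold=0.01, min_bout_s=1.0):
    """Return (start_s, end_s) bouts during which activity stays below threshold.

    A frame counts as 'still' when the changed-pixel fraction relative to
    the previous frame is below activity_threshold. Runs of still frames
    shorter than min_bout_s are discarded.
    """
    bouts = []
    start = None
    n = len(frames)
    for i in range(1, n):
        still = changed_fraction(frames[i - 1], frames[i], pixel_threshold) < activity_threshold
        if still and start is None:
            start = i
        elif not still and start is not None:
            if (i - start) / fps >= min_bout_s:
                bouts.append((start / fps, i / fps))
            start = None
    # Close a bout that is still open when the recording ends.
    if start is not None and (n - start) / fps >= min_bout_s:
        bouts.append((start / fps, n / fps))
    return bouts
```

Because this approach measures overall pixel change rather than the displacement of a tracked point, it can register small in-place movements that leave the body center nearly stationary, which is why it suits immobility and freezing classification.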

Sample Compatibility & Compliance

SMART 3.0 supports video input from standard USB3/UVC-compliant cameras (including Panlab’s HD-USB and IR-sensitive models), as well as GigE Vision and legacy FireWire sources. It accommodates grayscale and color streams at resolutions up to 1920×1080 @ 60 fps. The software has been validated for use with C57BL/6 and BALB/c mice, Sprague-Dawley and Wistar rats, and zebrafish (Danio rerio) larvae. All modules address GLP-aligned data integrity requirements through a full audit trail (user login, parameter changes, analysis runs), timestamped raw video linkage, and immutable metadata embedding. Exported datasets retain traceability to the original acquisition sessions, which supports documentation expectations under FDA 21 CFR Part 11 when the software is deployed with a validated system configuration and access controls.

Software & Data Management

The SMART 3.0 interface follows a guided workflow paradigm: experiment setup → video import/calibration → ROI definition → tracking parameter optimization → automated analysis → statistical export. Batch processing supports parallel execution across multiple videos with identical configuration profiles. The embedded statistics engine computes standard behavioral endpoints (latency to platform, arm entries, zone dwell time, immobility duration) alongside advanced indices such as spontaneous alternation rate (T/Y maze), preference score (CPP), and proximity index (social interaction). All results are stored in a local SQLite database with relational linking between subject ID, session, module, and raw video file path. Custom report templates can be generated via the Report Designer tool and exported as PDF or HTML for inclusion in study reports or regulatory submissions.
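As an example of one of the indices named above, spontaneous alternation in a three-arm Y maze is commonly computed as the share of consecutive entry triplets that visit three different arms. The sketch below uses that common definition; whether SMART 3.0 applies exactly this formula is an assumption, and the function name is hypothetical.

```python
# Common Y-maze definition: alternations / (total entries - 2) * 100.
# Hypothetical helper, not a SMART 3.0 function.

def spontaneous_alternation_pct(entries):
    """Percent of overlapping entry triplets that contain three distinct arms.

    `entries` is the ordered sequence of arm labels the animal entered.
    Returns None when fewer than three entries were made, because no
    triplet can be formed.
    """
    if len(entries) < 3:
        return None
    triads = len(entries) - 2
    alternations = sum(
        1 for i in range(triads) if len(set(entries[i:i + 3])) == 3
    )
    return 100.0 * alternations / triads
```

For the entry sequence A, B, A, C, B the triplets are ABA, BAC, and ACB; two of the three are alternations, so the function returns roughly 66.7.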

Applications

- Anxiety-related behavior: Elevated plus maze (SMART-PM), open field (SMART-OF), light-dark box, zero maze — quantifying open-arm time, thigmotaxis, center exploration

- Depression-like phenotypes: Forced swim test (SMART-FST), tail suspension test — classifying active vs. immobile bouts with latency and frequency metrics

- Spatial learning & memory: Morris water maze (SMART-WM), Barnes maze, radial arm maze (SMART-TY), T-maze — measuring escape latency, platform crossings, arm choice accuracy

- Recognition memory: Novel object recognition (NOR), object location — calculating discrimination index and exploration time ratios

- Addiction & reward processing: Conditioned place preference (SMART-CPP) — analyzing time spent in drug-paired chambers and transition counts

- Social behavior: Three-chamber sociability, resident-intruder — computing interaction duration, proximity events, and dyadic movement synchrony (SMART-SI)

- Zebrafish larval screening: Multi-well plate assays (SMART-MA) — high-throughput locomotor profiling under pharmacological or genetic perturbation
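Several of the endpoints listed above reduce to simple arithmetic on zone or exploration times. The following sketch shows a widely used form of the discrimination index and a per-zone dwell-time calculation from per-frame zone labels. The function names and the label format are assumptions for illustration and do not describe the SMART 3.0 export format.

```python
# Hypothetical helpers for post-processing tracked data.

def discrimination_index(novel_s, familiar_s):
    """(novel - familiar) / (novel + familiar), ranging from -1 to 1.

    Returns None when the animal explored neither object.
    """
    total = novel_s + familiar_s
    if total <= 0:
        return None
    return (novel_s - familiar_s) / total

def zone_dwell_time(zone_labels, fps):
    """Seconds spent in each zone, given one zone label per video frame."""
    counts = {}
    for label in zone_labels:
        counts[label] = counts.get(label, 0) + 1
    return {zone: n / fps for zone, n in counts.items()}

def percent_time(dwell, zone):
    """Share of total tracked time spent in `zone`, in percent."""
    total = sum(dwell.values())
    if not total:
        return None
    return 100.0 * dwell.get(zone, 0.0) / total
```

For instance, 30 s of novel-object exploration against 10 s of familiar-object exploration gives a discrimination index of 0.5, and `percent_time` applied to the open-arm zone yields the open-arm time percentage used in elevated plus maze analysis.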

FAQ

Is SMART 3.0 compatible with third-party video capture hardware?

Yes — SMART 3.0 supports UVC-compliant USB cameras, GigE Vision devices, and legacy IEEE-1394 (FireWire) sources. Camera drivers must be Windows-compatible and expose raw frame buffers.

Can I upgrade from SMART 2.5 to SMART 3.0 without reinstalling my existing modules?

Yes — licensed SMART 2.x users qualify for a paid upgrade path that preserves calibration files, ROI definitions, and historical project databases.

Does SMART 3.0 support automated detection of grooming or freezing behavior?

Freezing is reliably detected via SMART-GA’s motion-threshold algorithm; grooming requires manual annotation or integration with external deep-learning tools (e.g., DeepLabCut), as SMART does not include pose-estimation CNNs.

How is data security handled during multi-user lab deployment?

Each user account enforces role-based permissions (administrator, analyst, viewer); all analysis operations are logged with timestamps, IP addresses (if network-deployed), and operator IDs.

Are validation documents available for GxP-regulated studies?

Harvard Apparatus provides IQ/OQ documentation templates and a vendor-supplied Risk Assessment Report (RAR) aligned with ISO/IEC 17025 and ASTM E2500-13 guidelines for software used in regulated environments.