ZOLIX VR Latency Testing System

| Brand | ZOLIX |
|---|---|
| Origin | Beijing, China |
| Manufacturer Type | Authorized Distributor |
| Origin Category | Domestic (China-made) |
| Model | VR |
| Price | USD 28,000 (approx.) |

Overview

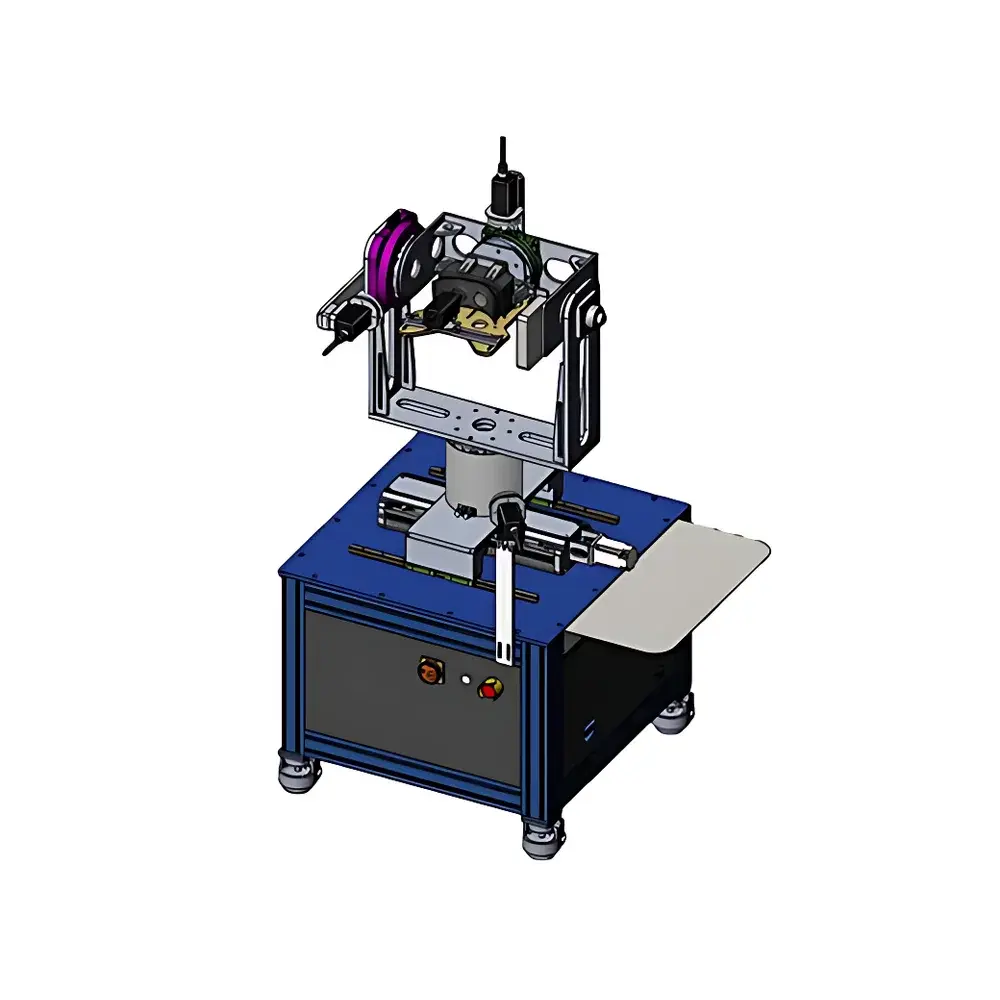

The ZOLIX VR Latency Testing System is a precision optical metrology platform engineered to quantify end-to-end motion-to-photon latency in virtual reality (VR) head-mounted displays (HMDs). It operates on the principle of synchronized high-speed motion capture and image acquisition: a computer-controlled 3-axis rotational stage replicates human head kinematics (pitch, yaw, and roll) while two time-synchronized high-speed cameras record both the mechanical motion of the stage (via calibrated scale markings on the rotating axes) and the corresponding rendered output on the VR display. Sub-pixel feature tracking extracts one displacement-time profile for the physical motion trajectory and another for the visual response, and the system computes the temporal offset between the two with microsecond-level resolution. Because the measurement is taken optically at the display, it does not rely on synthetic test patterns or embedded timing signals, which makes it suitable for evaluating real-world VR content under realistic user-motion conditions.
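
The offset between the two displacement-time profiles can be illustrated with a short cross-correlation sketch. This is not the vendor's implementation: the function name `estimate_lag`, the synthetic pulse data, and the parabolic peak refinement are all assumptions made for illustration, and the 1600 fps sample rate is simply the VGA frame rate quoted for the display camera.

```python
import math


def estimate_lag(stimulus, response, sample_rate_hz, max_lag):
    """Return the delay in seconds of `response` relative to `stimulus`.

    Both inputs are equal-length displacement sequences sampled at the
    same rate. Hypothetical helper, not part of any vendor API.
    """
    n = len(stimulus)
    mean_s = sum(stimulus) / n
    mean_r = sum(response) / n
    s = [x - mean_s for x in stimulus]
    r = [x - mean_r for x in response]

    def corr(lag):
        # Sum of s[i] * r[i + lag] over the indices where both exist.
        return sum(s[i] * r[i + lag]
                   for i in range(max(0, -lag), min(n, n - lag)))

    lags = list(range(-max_lag, max_lag + 1))
    scores = [corr(k) for k in lags]
    best = max(range(len(scores)), key=scores.__getitem__)
    lag = float(lags[best])

    # Refine the integer peak with a three-point parabolic fit so the
    # estimate is not limited to whole frame periods.
    if 0 < best < len(scores) - 1:
        y0, y1, y2 = scores[best - 1], scores[best], scores[best + 1]
        denom = y0 - 2.0 * y1 + y2
        if denom != 0.0:
            lag += 0.5 * (y0 - y2) / denom
    return lag / sample_rate_hz


# Synthetic check: a smooth pulse and a copy delayed by 32 samples.
FPS = 1600   # VGA frame rate quoted for the display camera
DELAY = 32   # samples, i.e. 20 ms at 1600 fps (assumed test value)
stage = [math.exp(-((i - 150) / 20.0) ** 2) for i in range(500)]
display = [math.exp(-((i - 150 - DELAY) / 20.0) ** 2) for i in range(500)]
latency_s = estimate_lag(stage, display, FPS, max_lag=80)
print(f"estimated latency: {latency_s * 1e3:.2f} ms")
```

In practice the two cameras would need to be resampled onto a common time base before correlating, since they run at different rates.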

Key Features

- Triaxial rotational stage with a ±300° rotation range per axis (θ₁, φ, ψ), enabling full-range simulation of natural head movement, including rapid head turns.

- High-precision angular positioning: <0.1° repeatability; maximum angular velocity up to 180°/s; acceleration up to 360°/s².

- Dual high-speed imaging architecture: One PLT PL2 industrial camera (14-bit, 1 MS/s sampling, analog input synchronization) captures stage motion via scale markers; one CMOS high-speed camera (up to 10,000 fps at reduced ROI, 1600 fps at VGA) records VR display output with programmable shutter (1/50–1/100,000 s).

- Hardware-triggered synchronization: External TTL/analog trigger inputs ensure sub-millisecond alignment between motion actuation and image capture across both cameras.

- Integrated safety infrastructure: Limit sensors on all axes and an emergency stop button compliant with IEC 60204-1 (safety of machinery, electrical equipment of machines).

- Modular mechanical interface: Customizable universal fixture plates support diverse HMD form factors (e.g., standalone, PC-tethered, eye-tracking-integrated units).
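
To give a feel for what the velocity and acceleration limits above imply, the sketch below computes the duration of a rest-to-rest move under a simple trapezoidal velocity profile. The trapezoidal shape and the function name `trapezoid_profile` are assumptions for illustration; the source does not state which profile shapes the controller actually uses.

```python
import math


def trapezoid_profile(angle_deg, v_max=180.0, a_max=360.0):
    """Return (t_accel, t_cruise, t_total) in seconds for a rest-to-rest move.

    Defaults are the maximum angular velocity (deg/s) and acceleration
    (deg/s^2) from the feature list. Hypothetical helper.
    """
    angle = abs(angle_deg)
    t_accel = v_max / a_max                 # time to reach full speed
    d_accel = 0.5 * a_max * t_accel ** 2    # angle covered while ramping up

    if 2.0 * d_accel >= angle:
        # Move is too short to reach v_max: triangular profile,
        # where angle = a_max * t_accel^2.
        t_accel = math.sqrt(angle / a_max)
        return t_accel, 0.0, 2.0 * t_accel

    t_cruise = (angle - 2.0 * d_accel) / v_max
    return t_accel, t_cruise, 2.0 * t_accel + t_cruise


for sweep in (40.0, 180.0):
    t_a, t_c, t_total = trapezoid_profile(sweep)
    print(f"{sweep:6.1f} deg: accel {t_a:.3f} s, cruise {t_c:.3f} s, "
          f"total {t_total:.3f} s")
```

Under these assumptions the stage needs 0.5 s and 45° of travel to reach 180°/s, so any sweep of 90° or less never reaches the maximum velocity.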

Sample Compatibility & Compliance

The system accommodates VR headsets with external optical access (e.g., front-facing display viewport or waveguide exit pupil region), supporting devices with diagonal display dimensions up to 250 mm. Mechanical mounting complies with ISO 10360-2 geometric accuracy standards for coordinate measuring machines (CMMs), ensuring traceable stage motion fidelity. Data acquisition and analysis workflows are designed to support GLP-compliant documentation: audit trails, user authentication, electronic signatures, and raw data immutability, all configurable within PLEXLOGplus II software. While not certified to FDA 21 CFR Part 11 out of the box, the software architecture supports validation protocols required for regulated R&D environments (e.g., medical VR device development under ISO 13485).

Software & Data Management

PLEXLOGplus II serves as the unified control and analysis environment. It provides real-time camera preview, hardware-trigger configuration, motion profile scripting (angle/velocity/acceleration ramps), and automated post-capture analysis. Core algorithms include: (1) Sub-pixel centroid tracking of high-contrast features on both motion scale and VR frame sequences; (2) Cross-correlation-based temporal alignment of displacement curves; (3) Piecewise linear fitting to identify onset and offset delays between mechanical stimulus and visual response. The software exports timestamped CSV files containing synchronized position, velocity, acceleration, and pixel-displacement vectors. All raw video, metadata, and analysis logs are stored with SHA-256 checksums for integrity verification. Custom API access (DLL/.NET) enables integration with LabVIEW, MATLAB, or Python-based test automation frameworks.
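
As an illustration of step (1), the sketch below computes an intensity-weighted centroid, one common way to locate a bright feature with sub-pixel precision. It is a generic technique, not the PLEXLOGplus II algorithm; the function name `subpixel_centroid`, the nested-list image format, and the sample patch are assumptions.

```python
def subpixel_centroid(image, threshold=0.0):
    """Return the intensity-weighted centroid (x, y) of a grayscale patch.

    `image` is a list of rows of pixel intensities. Only pixels brighter
    than `threshold` contribute, weighted by how far they exceed it.
    Hypothetical helper for illustration.
    """
    total = 0.0
    sum_x = 0.0
    sum_y = 0.0
    for y, row in enumerate(image):
        for x, value in enumerate(row):
            weight = value - threshold
            if weight > 0:
                total += weight
                sum_x += weight * x
                sum_y += weight * y
    if total == 0.0:
        raise ValueError("no pixel exceeds the threshold")
    return sum_x / total, sum_y / total


# A 4x4 patch whose bright marker is weighted towards column 2.
patch = [
    [0, 0, 0, 0],
    [0, 1, 3, 0],
    [0, 1, 3, 0],
    [0, 0, 0, 0],
]
cx, cy = subpixel_centroid(patch)
print(f"centroid at x={cx:.2f}, y={cy:.2f}")
```

Applying the same function to every frame of a sequence yields the pixel-displacement curve that the temporal alignment step then operates on.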

Applications

- Quantitative evaluation of motion-to-photon latency in consumer and enterprise VR/AR headsets during certification testing.

- Comparative benchmarking of rendering pipelines (e.g., asynchronous timewarp vs. reprojection vs. native frame pacing).

- Validation of low-persistence display technologies and dynamic refresh rate modulation schemes.

- Correlation studies between measured latency and user-reported simulator sickness (SSQ) or task performance metrics.

- Extension to other optomechanical systems: smartphone drop-test impact visualization, MEMS mirror actuation characterization, and optical component thermal drift monitoring.

FAQ

What types of VR headsets can be tested?

Standardized fixtures support most commercially available HMDs with accessible front-facing optics. Custom adapters are available for enterprise or prototype units.

Is the system compatible with eye-tracking-enabled VR devices?

Yes. External optical access is preserved; however, concurrent eye-tracking data must be acquired separately and synchronized via shared trigger signals.

Can latency be measured under variable frame rates (e.g., dynamic VRR)?

Yes. The system captures open-loop motion profiles independent of display refresh, allowing latency assessment across arbitrary frame timing sequences.

Does the software support automated pass/fail reporting per industry benchmarks?

Custom report templates can be configured to compare measured latency against thresholds defined in IEEE Std 2048-2021 (VR/AR system performance criteria) or internal QA specifications.
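
A threshold comparison of this kind can be sketched in a few lines. The function name `evaluate_latency`, the sample values, the 20 ms limit, and the worst-case pass criterion are all placeholders chosen for illustration; they are not taken from any standard or from the software's report templates.

```python
from statistics import mean


def evaluate_latency(samples_ms, limit_ms):
    """Summarize repeated latency measurements against a single limit.

    Passes only if the worst measurement is within the limit (an assumed
    criterion; a QA specification may use the mean or a percentile).
    """
    if not samples_ms:
        raise ValueError("at least one measurement is required")
    worst = max(samples_ms)
    return {
        "n": len(samples_ms),
        "mean_ms": mean(samples_ms),
        "max_ms": worst,
        "limit_ms": limit_ms,
        "pass": worst <= limit_ms,
    }


# Placeholder measurements and limit, not real test data.
report = evaluate_latency([18.2, 19.1, 21.4], limit_ms=20.0)
print("PASS" if report["pass"] else "FAIL", report)
```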

What calibration procedures are required before testing?

Stage angular calibration uses built-in encoder feedback and optional optical encoder verification; camera spatial calibration employs checkerboard-based homography mapping per ISO 10360-7 Annex D.